Control symbols now inserted on macro

-

Hi, @oasisindesert, @peterjones, @alan-kilborn, @ekopalypse and all

Peter, rereading your last post this morning, I see that you said:

I just took Guy’s edition, and ran his macro once in a new file with Encoding > ANSI set, and it worked as Guy described. Then I made a new file, set Encoding > UTF-8, and the bug showed itself – same load of the program, same shortcuts.xml.

Maybe I’m missing something obvious!

Opening N++ with the archive that I sent you, the default encoding of new 1 is UTF-8.

But, after numerous tests, using, first, any option of the Encoding menu and, even, the options Encoding > Character Set > ... > from Windows-1250 to Windows-1258, which stand for the main ANSI encodings, then running the macro, I’ve never noticed any control character wrongly inserted!?

So, I suppose that the main point is to have an old 32-bit operating system and, therefore, to use the 32-bit version of N++, as well. In that case, the bug never seems to happen!

Best Regards

guy038

-

So, to summarize, the current state is that

- the 32bit version is not affected at all
- the 64bit version works with the document codec set to one of the 8bit encodings

and the troublesome case is

- the 64bit version with documents having a unicode encoding.

Which leads to the assumption that macros working on unicode text with the 64bit npp version have a bug.

-

@Ekopalypse said in Control symbols now inserted on macro:

32bit version is not affected at all

I disagree. As described above, by manually setting the Encoding > UTF-8 toggle, I replicated the bug in 7.8.1-32bit.

-

Not sure if this is helpful in this case, but using Linux and Wine I don’t see the problem on a 64bit version at all.

Notepad++ v7.8.1 (64-bit)

Build time : Oct 27 2019 - 22:57:19

Path : Z:\home…\notepad-plus-plus\notepad-plus-plus.exe

Admin mode : ON

Local Conf mode : ON

OS Name : Microsoft Windows 7 (64-bit)

OS Build : 7601.0

WINE : 3.0.4

Plugins : mimeTools.dll NppConverter.dll NppExport.dll

-

@Ekopalypse : weird.

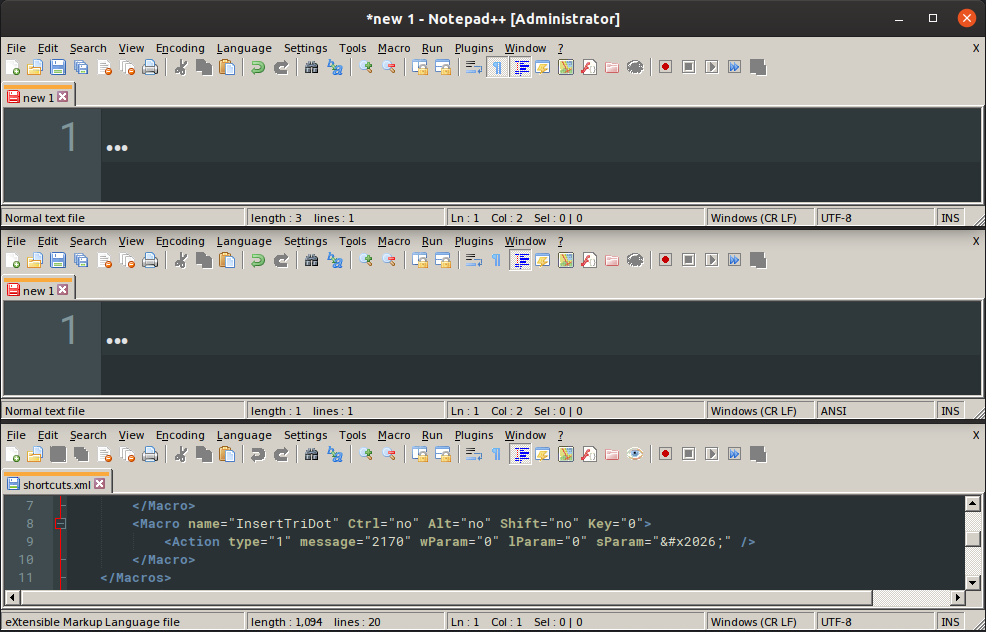

To re-confirm what I said earlier today, here’s a screenshot of the bug in action in NPP-32bit on Win10-64bit, showing encoding-dependency:

- @PeterJones: With both 7.8.1-64bit and 7.8.1-32bit on 64bit Win10 Home 1903 18362.476,

  - The bug shows with Encoding > UTF-8 but not with Encoding > ANSI

- @guy038: In WinXP 32bit, NPP 7.8.1-32bit, the bug does not appear to show

- @Ekopalypse: In Linux WINE 3.0.4 with Guest=Win7-64bit 7601.0, NPP 7.8.1-64bit, the bug does not show in either UTF-8 or ANSI

Strange bug.

-

Updated https://github.com/notepad-plus-plus/notepad-plus-plus/issues/7642 with the new screenshot and the summary of my experiments, @guy038’s results, and @Ekopalypse’s results (i.e., I basically copied my last post to the GitHub issue).

-

Agreed - very strange. I’m wondering if XP and Wine share the same code for the needed functionality in this case. That would make some sense. Maybe an older unicode library, or one different from what W7 and W10 use.

I have thought about it for some time now, but I don’t see where to start in order to track down the bug.

-

@Ekopalypse There has been a problem with the definition of encodings for a very long time; sometimes it is solved, but only partially))

And then a new surprise appears with encodings))

-

@andrecool-68 said in Control symbols now inserted on macro:

There has been a problem with the definition of encodings for a very long time

:-) I agree, but it has reached a new level, I would say. :-(

I can live with limitations if I understand why they are there, but fishing in the dark for understanding drives me mad.

-

@Ekopalypse This is no longer fishing … it’s sex with a concrete wall))

-

@andrecool-68

:-D ouch

-

I finally was able to get this problem to replicate under debugger control (what I was missing before was that my file was coming up with ANSI encoding preselected, and I didn’t notice that; now when the debugger starts up I go into the N++ Encoding menu and change it to UTF-8).

Here’s the problem:

    void recordedMacroStep::PlayBack(Window* pNotepad, ScintillaEditView *pEditView)
    {
        if (_macroType == mtMenuCommand)
        {
            ::SendMessage(pNotepad->getHSelf(), WM_COMMAND, _wParameter, 0);
        }
        else
        {
            // Ensure it's macroable message before send it
            if (!isMacroable())
                return;

            if (_macroType == mtUseSParameter)
            {
                char ansiBuffer[3];
                ::WideCharToMultiByte(static_cast<UINT>(pEditView->execute(SCI_GETCODEPAGE)), 0, _sParameter.c_str(), -1, ansiBuffer, 3, NULL, NULL);

The ::WideCharToMultiByte call returns 0 – indicating failure – and then a call to GetLastError returns 122, which is ERROR_INSUFFICIENT_BUFFER.

Indeed, the size of ansiBuffer, i.e., 3, is one too small for this case.

Changing it to 4 cures the problem for the UTF-8 files:

    char ansiBuffer[4];
    ::WideCharToMultiByte(static_cast<UINT>(pEditView->execute(SCI_GETCODEPAGE)), 0, _sParameter.c_str(), -1, ansiBuffer, 4, NULL, NULL);

-
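As a rough check on the buffer-size diagnosis in the post above, here is a minimal, portable sketch of the arithmetic. The helper names `utf8EncodedLength` and `bufferSizeWithTerminator` are hypothetical and exist only for illustration; they are not part of Notepad++ or the Win32 API. It assumes the source length is passed as -1, as in the quoted call, so the terminating NUL is converted too and needs one extra byte.

```cpp
#include <cstddef>

// Number of bytes UTF-8 needs to encode a single Unicode code point.
// Hypothetical helper for illustration only.
inline std::size_t utf8EncodedLength(char32_t codePoint)
{
    if (codePoint < 0x80)    return 1; // ASCII
    if (codePoint < 0x800)   return 2; // two-byte sequences
    if (codePoint < 0x10000) return 3; // rest of the Basic Multilingual Plane
    return 4;                          // supplementary planes
}

// Bytes needed when the terminating NUL is converted along with the character,
// which is what a source length of -1 requests from WideCharToMultiByte.
inline std::size_t bufferSizeWithTerminator(char32_t codePoint)
{
    return utf8EncodedLength(codePoint) + 1;
}
```

For example, `bufferSizeWithTerminator(0x20AC)` (the euro sign) is 4, so a 3-byte buffer is one byte short, while a two-byte character such as U+00E9 still fits in 3. A character outside the Basic Multilingual Plane would need 5, so if _sParameter could ever hold one (the thread does not say), even 4 would be too small.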

@Alan-Kilborn said in Control symbols now inserted on macro:

recordedMacroStep::PlayBack

…

ansiBuffer[4];

That makes some sense. At first I was wondering what change prompted that to start failing, and the GitHub blame shows those two lines haven’t been changed in 3-4 years. But really, if Scintilla changed the way it’s doing things, maybe the _sParameter.c_str() now has a 4byte char rather than a 3byte char, or something.

Alternately, MS Docs: WideCharToMultiByte shows that sending cbMultiByte (the 3 or 4 in the last arg before the NULLs) as a 0 will have it return the width needed, so wouldn’t it be more future proof to use:

    int ansiBufferLength = ::WideCharToMultiByte(static_cast<UINT>(pEditView->execute(SCI_GETCODEPAGE)), 0, _sParameter.c_str(), -1, ansiBuffer, 0, NULL, NULL); // grab needed ansiBuffer length
    ::WideCharToMultiByte(static_cast<UINT>(pEditView->execute(SCI_GETCODEPAGE)), 0, _sParameter.c_str(), -1, ansiBuffer, ansiBufferLength, NULL, NULL);

(though you’d need to either re-dim ansiBuffer, or make sure it’s always got enough room)
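One way the "re-dim ansiBuffer" part could look is sketched below: query the size first, allocate, then convert. This is only a sketch under stated assumptions, not the change made in Notepad++. The helper name `wideToCodePage` is hypothetical, the code is Windows-only (hence the `_WIN32` guard), and it returns an empty string on failure rather than reporting the error.

```cpp
#ifdef _WIN32
#include <windows.h>
#include <string>

// Hypothetical helper (not Notepad++ code): convert a NUL-terminated wide
// string to the given code page, sizing the buffer from the API's own answer.
inline std::string wideToCodePage(const wchar_t* wide, UINT codePage)
{
    // First call: cbMultiByte == 0 only returns the required size in bytes.
    // The size includes the terminating NUL because the source length is -1.
    const int needed = ::WideCharToMultiByte(codePage, 0, wide, -1, NULL, 0, NULL, NULL);
    if (needed <= 0)
        return std::string(); // conversion failed; GetLastError() has details

    std::string out(static_cast<size_t>(needed), '\0');

    // Second call: convert into a buffer of exactly the reported size.
    const int written = ::WideCharToMultiByte(codePage, 0, wide, -1, &out[0], needed, NULL, NULL);
    if (written <= 0)
        return std::string();

    out.resize(static_cast<size_t>(written) - 1); // drop the converted NUL
    return out;
}
#endif // _WIN32
```

A call such as `wideToCodePage(_sParameter.c_str(), static_cast<UINT>(pEditView->execute(SCI_GETCODEPAGE)))` would then no longer depend on a hard-coded 3 or 4, at the cost of a heap allocation per macro step.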